Requirements

VertexAI API Permissions

- Go to the VertexAI API page in the Google Cloud API Library.

- Select the GCP Project ID.

- Click on Enable API, which will enable the VertexAI API on the respective project.

GCP Permissions

To execute the metadata extraction workflow successfully, the user or service account must have sufficient access to fetch the required data. The following table describes the minimum required permissions.

| # | GCP Permission | Required For |
|---|---|---|
| 1 | aiplatform.models.get | Metadata Ingestion |
| 2 | aiplatform.models.list | Metadata Ingestion |
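As a quick pre-flight check, the two permissions in the table above can be compared against whatever permissions the account is known to hold. The sketch below is only an illustration: the helper name `missing_permissions` is hypothetical, and how the granted list is obtained (for example through an IAM permission test in your own tooling) is assumed to happen elsewhere.

```python
# Minimum permissions listed in the table above.
REQUIRED_PERMISSIONS = frozenset({
    "aiplatform.models.get",
    "aiplatform.models.list",
})


def missing_permissions(granted):
    """Return the required permissions that are absent from `granted`, sorted."""
    return sorted(REQUIRED_PERMISSIONS - set(granted))


if __name__ == "__main__":
    # Hypothetical input: permissions already known to be granted.
    granted = ["aiplatform.models.list"]
    print(missing_permissions(granted))
```

An empty result means both permissions required for Metadata Ingestion are present.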

Metadata Ingestion

GCP Credentials:

You can authenticate with your VertexAI instance in one of two ways: choose GCP Credentials Path and specify the file path of the service account key, or choose GCP Credentials Values and pass the values from the service account key file directly.

You can check out this documentation on how to create the service account keys and download them.

GCP Credentials Values: Pass the raw credential values from the service account key file. This requires the following information:

- Credentials Type: service_account. To fetch this key, look for the value associated with the type key in the service account key file.

- Project ID: the value of the project_id key in the service account key file. You can also pass multiple project IDs to ingest metadata from different VertexAI projects into one service.

- Private Key ID: the value of the private_key_id key in the service account key file.

- Private Key: the value of the private_key key in the service account key file.

- Client Email: the value of the client_email key in the service account key file.

- Client ID: the value of the client_id key in the service account key file.

- Auth URI: the value of the auth_uri key in the service account key file. The default value is https://accounts.google.com/o/oauth2/auth.

- Token URI: the value of the token_uri key in the service account key file. The default value is https://oauth2.googleapis.com/token.

- Auth Provider X509Cert URL: the value of the auth_provider_x509_cert_url key in the service account key file. The default value is https://www.googleapis.com/oauth2/v1/certs.

- Client X509Cert URL: the value of the client_x509_cert_url key in the service account key file.

GCP Credentials Path: Passing a local file path that contains the credentials.
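Before pasting values into the form, it can help to confirm that the service account key file actually contains every key described above. The following is a minimal sketch, not part of OpenMetadata: the function name `read_credential_values` is hypothetical, and treating the three URI keys as optional with the documented defaults is an assumption made for illustration.

```python
import json

# Defaults quoted in the GCP Credentials Values description above.
DEFAULTS = {
    "auth_uri": "https://accounts.google.com/o/oauth2/auth",
    "token_uri": "https://oauth2.googleapis.com/token",
    "auth_provider_x509_cert_url": "https://www.googleapis.com/oauth2/v1/certs",
}

# Keys that have no documented default and must be present in the key file.
REQUIRED_KEYS = (
    "type",
    "project_id",
    "private_key_id",
    "private_key",
    "client_email",
    "client_id",
    "client_x509_cert_url",
)


def read_credential_values(key_file_text):
    """Parse service account key JSON and return the values the form asks for."""
    data = json.loads(key_file_text)
    missing = [key for key in REQUIRED_KEYS if not data.get(key)]
    if missing:
        raise ValueError("missing keys: " + ", ".join(missing))
    if data["type"] != "service_account":
        raise ValueError("type must be service_account")
    values = {key: data[key] for key in REQUIRED_KEYS}
    # Fall back to the documented defaults when a URI key is absent or empty.
    for key, default in DEFAULTS.items():
        values[key] = data.get(key) or default
    return values
```

To use it with a file on disk, read the file at your GCP Credentials Path as text and pass that text to the function; a `ValueError` names the keys that still need to be supplied.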

Location:

Location refers to the geographical region where your resources, such as datasets, models, and endpoints, are physically hosted (e.g. us-central1, europe-west4).

Test the Connection

Once the credentials have been added, click on Test Connection and Save the changes.

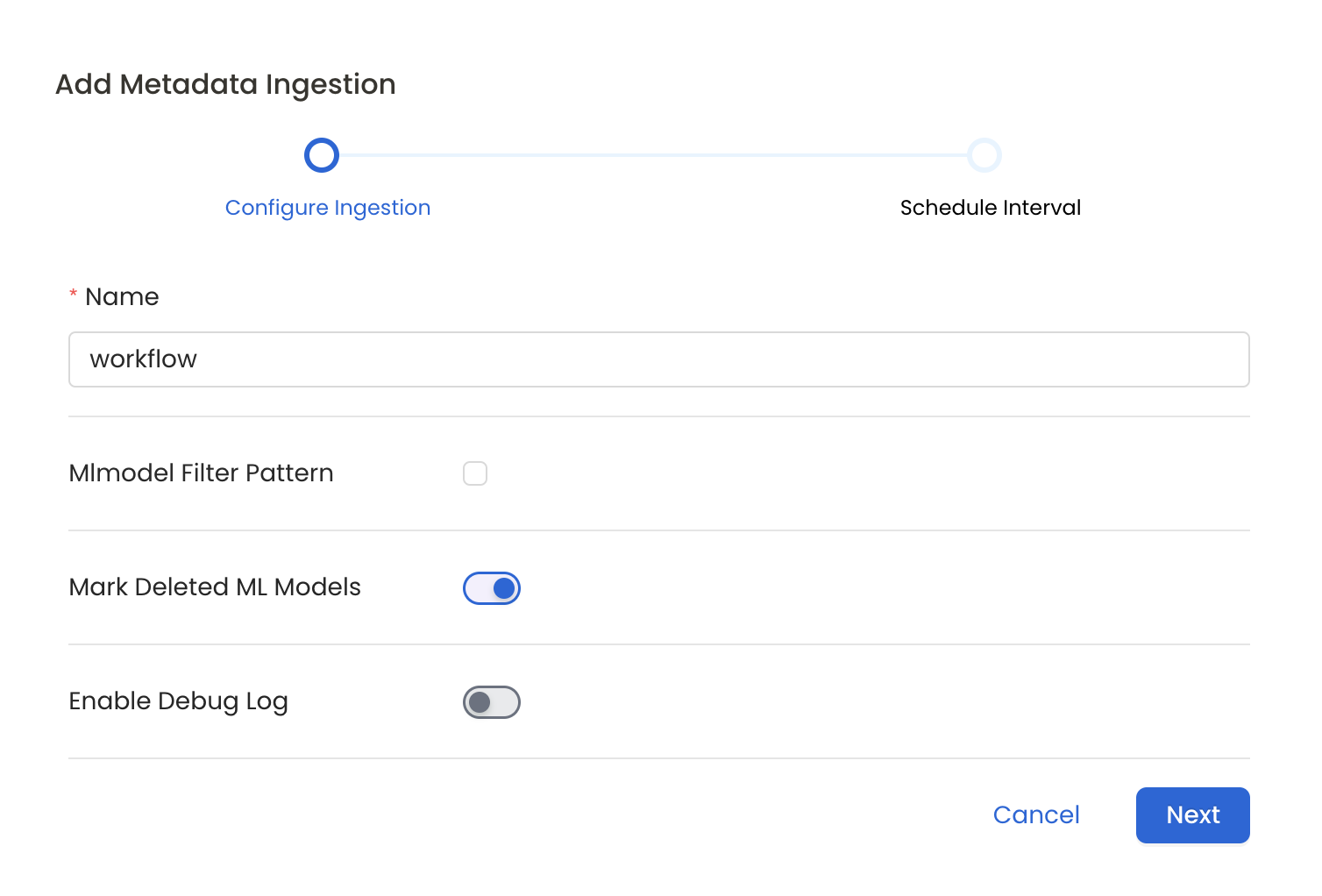

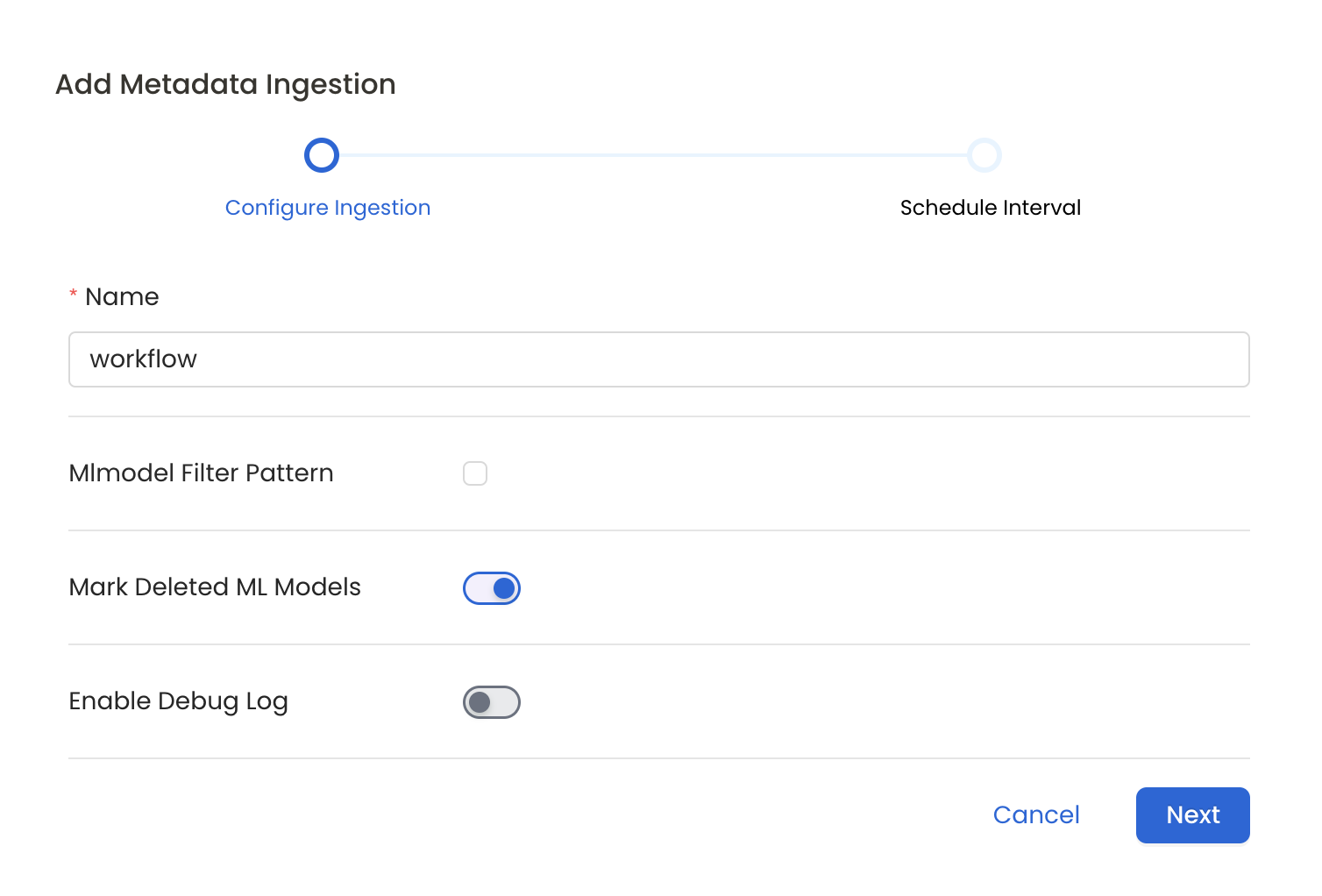

Configure Metadata Ingestion

In this step we will configure the metadata ingestion pipeline. Please follow the instructions below.

- Include: Explicitly include ML Models by adding a list of comma-separated regular expressions to the Include field. OpenMetadata will include all ML Models with names matching one or more of the supplied regular expressions. All other ML Models will be excluded.

- Exclude: Explicitly exclude ML Models by adding a list of comma-separated regular expressions to the Exclude field. OpenMetadata will exclude all ML Models with names matching one or more of the supplied regular expressions. All other ML Models will be included.
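The effect of the Include and Exclude fields can be previewed locally before saving them. This is a rough sketch rather than OpenMetadata's own filter: `select_models` is a hypothetical helper, it uses `re.search` (whether the real filter anchors the match or ignores case is not stated here), and it assumes that Exclude wins when a name matches both lists.

```python
import re


def select_models(names, include=(), exclude=()):
    """Return the ML Model names kept by the include and exclude patterns."""

    def matches_any(name, patterns):
        return any(re.search(pattern, name) for pattern in patterns)

    selected = []
    for name in names:
        # With Include patterns set, a name must match at least one of them.
        if include and not matches_any(name, include):
            continue
        # Assumption: a name matching any Exclude pattern is always dropped.
        if exclude and matches_any(name, exclude):
            continue
        selected.append(name)
    return selected


if __name__ == "__main__":
    # Hypothetical model names used only for illustration.
    names = ["churn_v1", "churn_v2", "fraud_detector"]
    print(select_models(names, include=[r"^churn_"]))
    print(select_models(names, exclude=[r"_v1$"]))
```

With the sample names, the Include pattern keeps only the two churn models, and the Exclude pattern drops only churn_v1.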

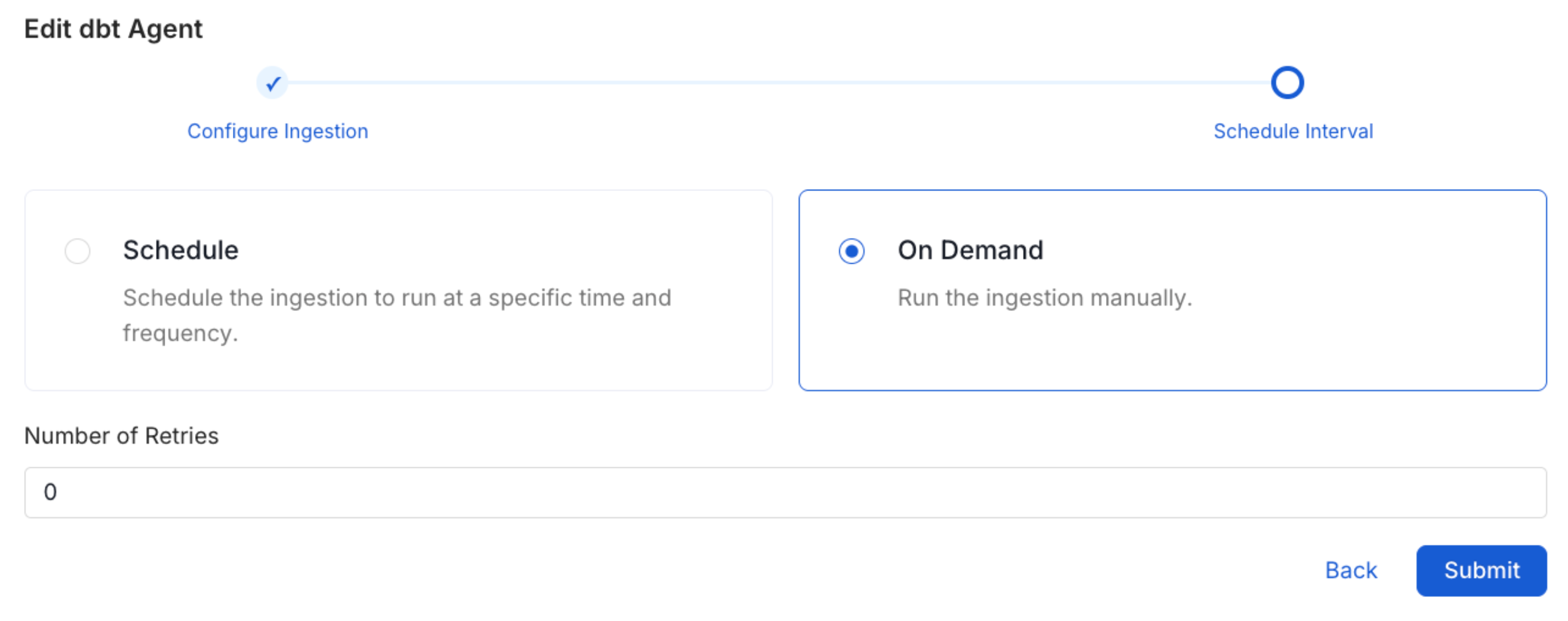

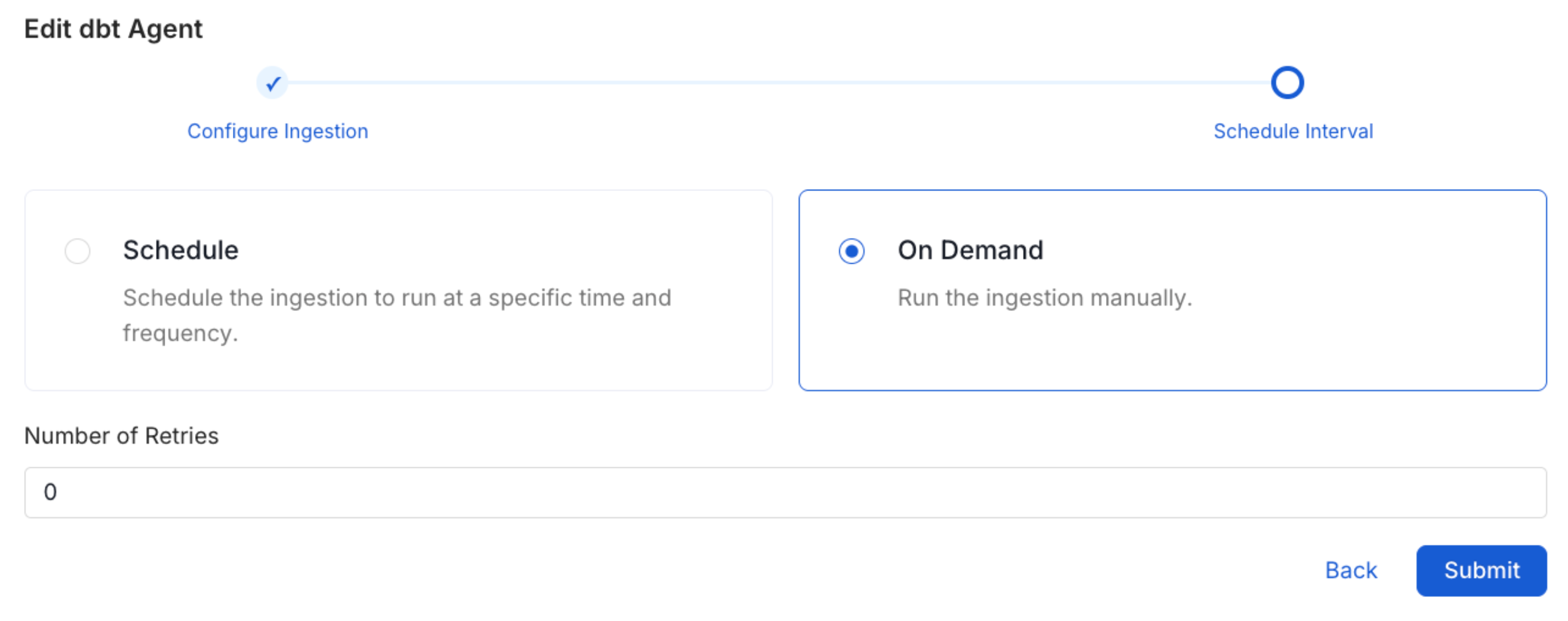

Schedule the Ingestion and Deploy

Scheduling can be set up at an hourly, daily, weekly, or manual cadence. The timezone is in UTC. Select a Start Date to schedule for ingestion. It is optional to add an End Date.

Review your configuration settings. If they match what you intended, click Deploy to create the service and schedule metadata ingestion.

If something doesn’t look right, click the Back button to return to the appropriate step and change the settings as needed.

After configuring the workflow, you can click on Deploy to create the pipeline.

Troubleshooting

VertexAI Troubleshooting

Learn more about how to troubleshoot common VertexAI connector issues and resolve configuration or ingestion errors.